Round 3 Carbanak/FIN7 results evaluation

Last month, the researchers at MITRE Engenuity released the results of their most recent ATT&CK Evaluation, offering businesses an opportunity to make informed choices about their own security needs. This year, by modeling the ATT&CK testing after attack methods deployed by the hacker groups Carbanak and FIN7, MITRE Engenuity’s newest evaluation sheds light on how some of the most trusted cybersecurity solutions on the market fare when pitted against some of the most prolific and advanced attacker tactics and techniques to date.

These are the kinds of results that can make any business consider reevaluating its cybersecurity strategy, but before leaping to conclusions, companies should consider whether the results they’re reading are meaningful for their own situations.

The results are most useful when you put them into the context of real-world environments and experience. Organizations without the in-house expertise of a SOC need solutions that are intuitive and effective. The barrage of security alerts can overwhelm: many IT and security teams aren’t going to be able to easily identify the ones that matter, and the more time they spend in the data weeds, the less time they have to dedicate to growing the business. These organizations also may not be set up to tackle the complex configuration updates some products require to deliver quality results.

Thus, while the ATT&CK Evaluation results do reveal the scope of Endpoint Detection and Response (EDR) products, showing how much these products detect in an environment, it is important to also evaluate both the quality (not just the quantity) of that data and how easily the results can be replicated and acted on by your team.

In their article “Winning MITRE ATT&CK, Losing Sight of Customers,” Forrester analysts Jeff Pollard and Allie Mellen explore this exact challenge, noting that “‘domination’ of the results does not prove the tool will be effective given your infrastructure, your team, or your business goals,” and that the ATT&CK Evaluation is “focused on the TOOL.”

“It’s NOT focused on the experience,” Pollard and Mellen said. “There are lots of great products poorly deployed, not deployed at all, misconfigured, or lacking the right visibility to be maximally effective.” To put this year’s ATT&CK Evaluation into context for our readers, we are going to listen to the experts, including Pollard and Mellen, and we are going to apply the framework created last year by former Forrester analyst Josh Zelonis to evaluate the Round 2 APT29 results (Zelonis has updated his framework for Round 3 in his GitHub repository).

Because of this, the graph we’re going to show you may not look like the graphs you’ve seen across the Internet. We understand that. But we think it is just as important to present information that is actionable out of the box as it is to present information that is true. And, fairly, this applies to all the cybersecurity providers included in this year’s ATT&CK Evaluation.

With that in mind, we will also explain further below how we arrived at the following results:

Eliminating configuration changes

First, Zelonis’s framework discards mid-test configuration changes that improved detection capabilities.

During the test, vendors may choose to change a standard setting to better detect the attacker technique being tested. These revised configurations are likely not the default settings for customers because they’d result in too many alerts. It’s better not to have a detection rule in place that you know will be noisy, generate false positives, and leave you scratching your head about what really matters in all that signal. Similarly, it is unreasonable to expect many customers to make these same configuration changes in-house. The vendors themselves had a team of experts on hand to review the results, determine the changes to make, and respond.

To map the test results to the needs and knowledge of many customers, we will discard any steps that were detected with a significant configuration change that affects the product’s detection capabilities. Now, we can better compare out-of-the-box product configuration and alert investigation experiences.
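The filtering step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Detection` record, its field names, and the sample step identifiers are hypothetical and do not reflect the actual format of the published evaluation data or of Zelonis’s framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """Hypothetical record for one detection in an evaluation result set."""
    step: str             # illustrative step identifier, e.g. "1.A.1"
    detection_type: str   # "General", "Tactic", or "Technique"
    config_change: bool   # True if it relied on a mid-test configuration change


def out_of_the_box(detections):
    """Keep only detections that did not rely on a mid-test configuration change."""
    return [d for d in detections if not d.config_change]


# Made-up sample data, for illustration only.
sample = [
    Detection("1.A.1", "Technique", False),
    Detection("1.A.2", "General", True),   # discarded: needed a config change
    Detection("2.A.1", "Tactic", False),
]

kept = out_of_the_box(sample)
print([d.step for d in kept])  # ['1.A.1', '2.A.1']
```

Whatever remains after this filter is what a customer could expect to see with the default product configuration.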

Determining alert quality

Alert quality is also critical when you want to quickly determine what you need to investigate and respond to. We suggest you use the following to help interpret the ATT&CK Evaluations results:

Security analytics

In this test, detections can be any of three types of alerts (General, Tactic, and Technique), and there is a hierarchy to these detection types.

The highest quality alerts are Techniques. They are where the real detail comes into play—where you know what you are dealing with, the specific steps taken, and what to investigate swiftly. For example, compare the following two alerts:

- “A PowerShell script executed”

- “T1041 – Exfiltration Over Command and Control Channel”

The latter provides precise, actionable details about what occurred (the theft of data) and how.

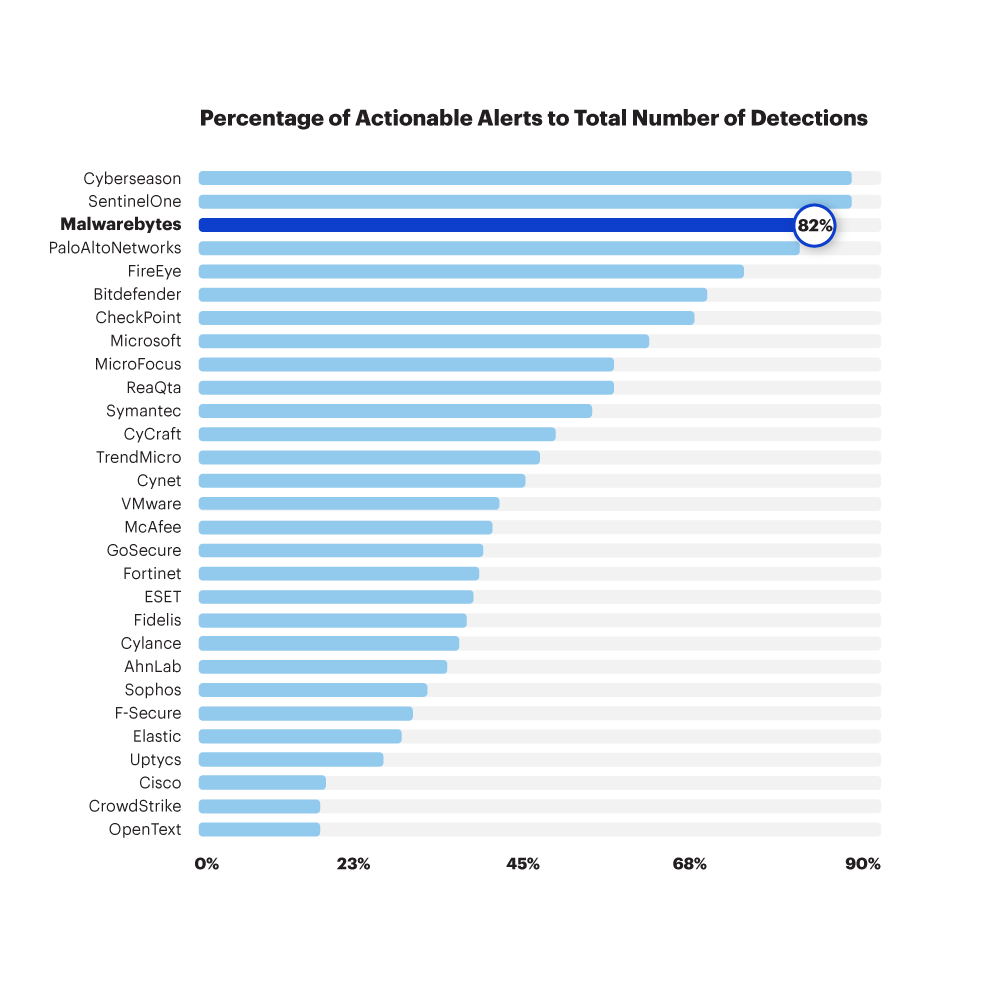

The more Techniques in the vendor’s results, the better the analytics capabilities of their EDR product and the swifter the investigation. Thus, we determine the quality of the alerts triggered by an EDR product by dividing the total number of Technique Alerts by the total number of Detections.
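As a rough sketch of that ratio, the calculation might look like the following in Python. The function name `alert_quality` and the sample list are assumptions made for illustration; the numbers are invented and are not any vendor’s results.

```python
from collections import Counter


def alert_quality(detection_types):
    """Share of all detections that are Technique alerts (0.0 if there are none)."""
    counts = Counter(detection_types)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return counts["Technique"] / total


# Made-up sample: three Technique alerts out of five detections.
sample = ["Technique", "Technique", "Tactic", "General", "Technique"]
print(f"{alert_quality(sample):.0%}")  # 60%
```

A value closer to 100% would mean that most of what the product reports already carries Technique-level detail.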

We strongly believe that small IT and security teams should prioritize alert quality over quantity when evaluating an EDR product, while enterprises and MSPs will also benefit from enriching their SOC data with greater context.

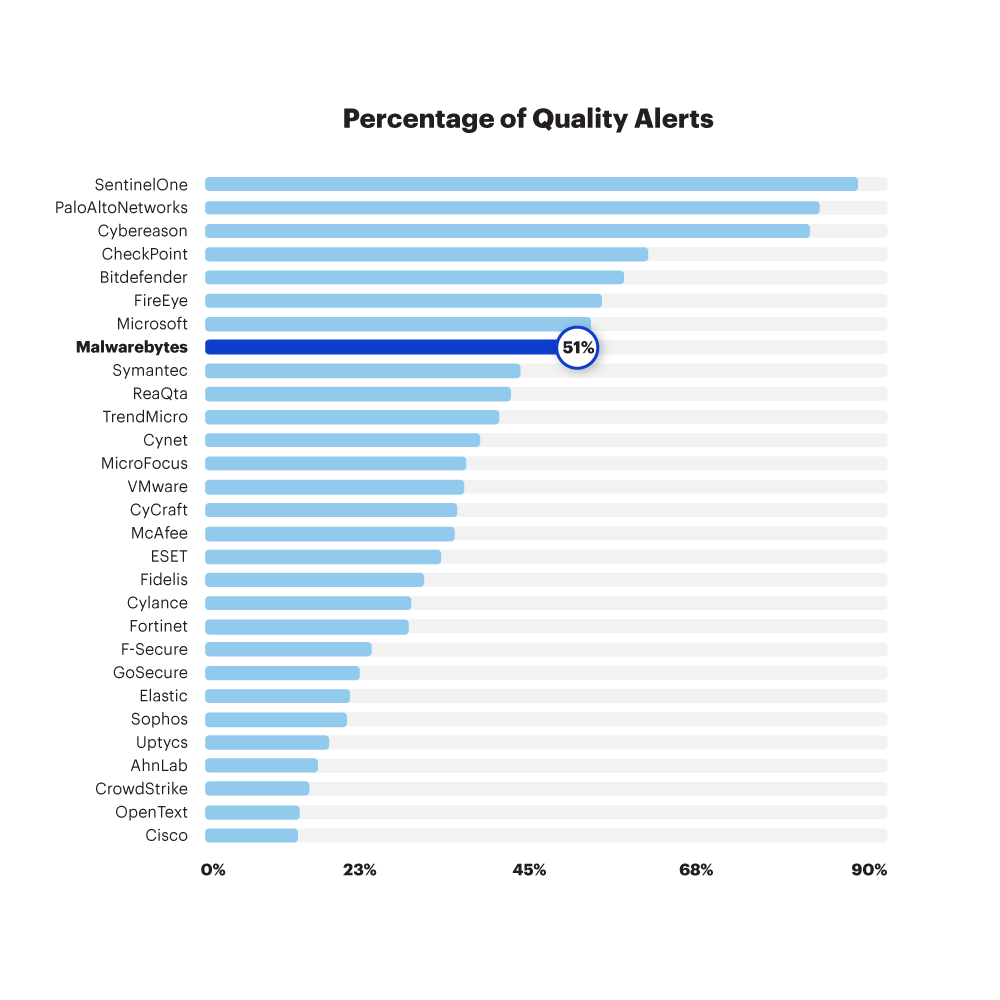

Quality rate

EDR vendors should strive for quality alerts out of the box, but they also have to trigger enough quality alerts for IT and security teams to have that all-important level of detail about every action that attackers have taken. To complete this perspective of the data, then, we define Quality Rate by dividing the total number of Technique alerts by the total number of steps during the test. Was there a quality alert for each step?
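A minimal Python sketch of the Quality Rate follows. It assumes, purely for illustration, that each test step is recorded with its single best detection type (or `None` if nothing was detected); the function names, the dictionary layout, and the sample steps are all hypothetical.

```python
def quality_rate(best_detection_by_step):
    """Technique alerts divided by the total number of steps in the test."""
    total_steps = len(best_detection_by_step)
    if total_steps == 0:
        return 0.0
    technique_alerts = sum(
        1 for kind in best_detection_by_step.values() if kind == "Technique"
    )
    return technique_alerts / total_steps


def steps_without_technique(best_detection_by_step):
    """List the steps that did not produce a Technique alert."""
    return [
        step for step, kind in best_detection_by_step.items() if kind != "Technique"
    ]


# Made-up sample: four steps, two of them with a Technique alert.
sample = {
    "1.A.1": "Technique",
    "1.A.2": None,        # no detection at all
    "2.A.1": "Tactic",
    "2.A.2": "Technique",
}

print(quality_rate(sample))             # 0.5
print(steps_without_technique(sample))  # ['1.A.2', '2.A.1']
```

The second function answers the question directly: any step it returns is one where the team would have had to investigate without Technique-level detail.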

Getting the complete picture

One last consideration is worthwhile. EDR is an essential endpoint security strategy today, but endpoint protection—the prevention side of the story—also plays a critical role, and even more so for those who aren’t looking to hire or invest in an incident response team. Just as reducing the noise helps you zero in on alerts that matter, reducing the attack surface—assessing vulnerabilities and securing weak points in your defenses—helps you limit the threats that get through so you can more easily respond to them.

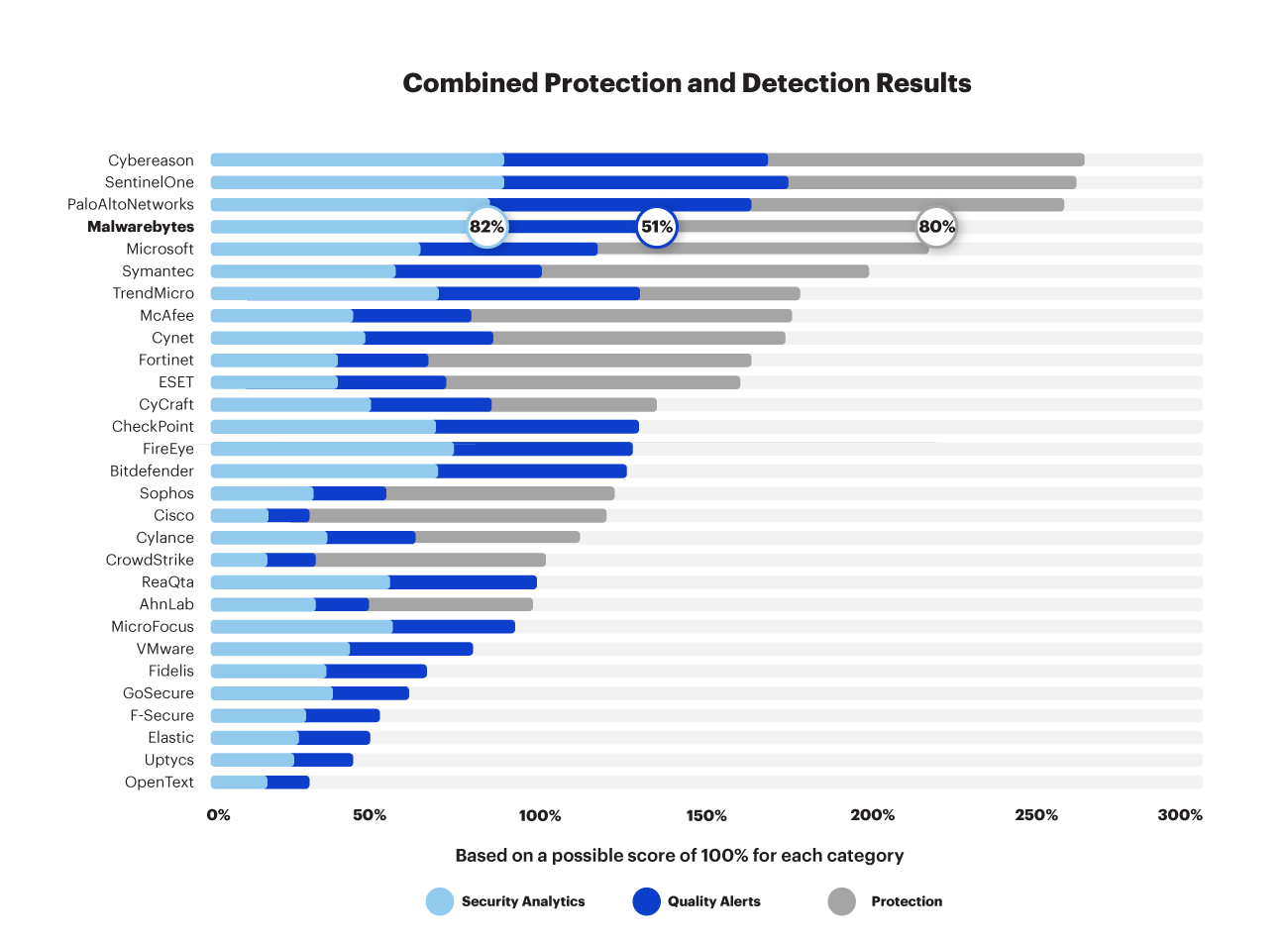

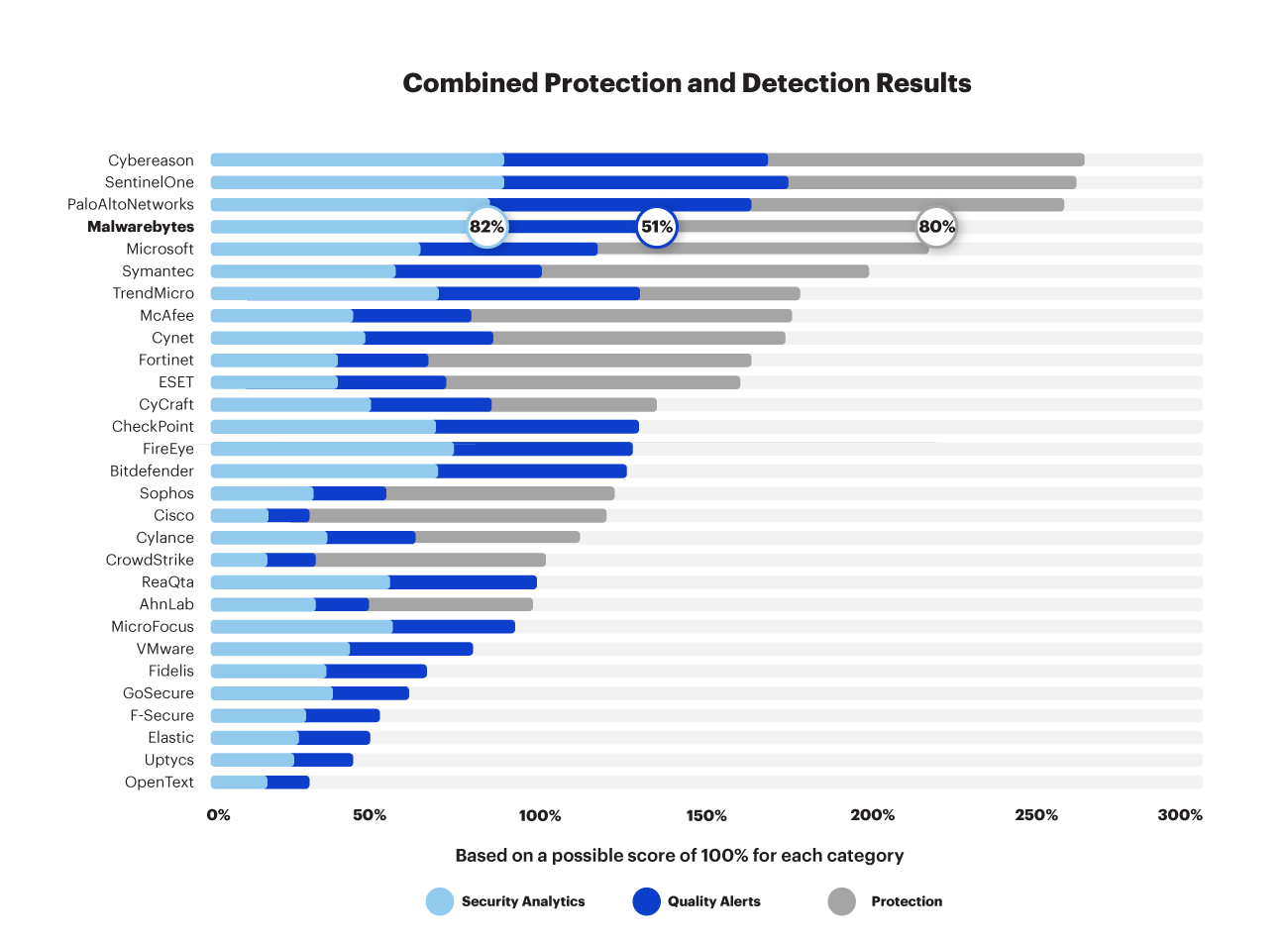

In the Round 3 evaluation, MITRE Engenuity also assessed protection capabilities. Let’s combine the protection and detection results to get the complete picture:

When viewed in context, Malwarebytes blocked eight out of 10 attacks on the earlier stages of the attack chain. Malwarebytes is not an EDR-only solution. It is a complete, integrated EP + EDR solution that provides multi-layered defense-in-depth for all types of modern cyberattacks, while remaining easy to use out of the box by organizations of all sizes.

We share this information to inform. Companies deserve to know exactly what they are buying when they purchase a cybersecurity solution, and they deserve to know how those solutions are tested—that includes the conditions, the circumstances, and the real-world applications of those tests.

At Malwarebytes, we must be realistic about those real-world applications. For many businesses, cybersecurity is a set-it-and-forget-it product, and in-house SOCs and internal teams that can routinely adjust alert settings are luxuries. That’s just a fact, and it does not matter whether the cybersecurity industry likes it or not. What matters is whether cybersecurity vendors are willing to honestly support their customers’ needs.